AI Will Break School – Unless We Redesign It

On its own, AI will not fix education. In fact, if we don’t change, AI may well break it.

Not because AI is inherently harmful, but because it is exposing weaknesses that have existed in our education system for a long time.

For decades, schools have relied on models built for a different era: standardized tasks, compliance-driven work, and assessments that reward correct answers over deep thinking. AI didn’t create these problems, but it is accelerating their detrimental effects. It makes them impossible to ignore.

In that sense, AI is a stress test.

AI Reveals What Was Already Fragile

Today’s AI systems can answer most of the questions we ask students – often with speed, clarity, and sophistication that rival or exceed those of advanced, graduate-level learners.

That reality alone destabilizes core assumptions about school.

If even a subset of students use AI to complete their work, traditional grading systems begin to lose meaning. Signals of excellence become harder to distinguish from outputs wholly generated or heavily supported by AI. Over time, this doesn’t just affect individual assignments; it distorts entire systems of ranking, GPA, and academic differentiation.

For colleges and employers, this raises a fundamental question: what do grades actually represent?

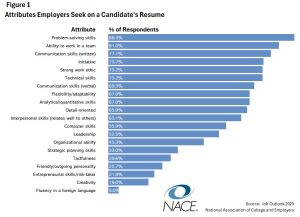

At the same time, the skills most in demand by employers – critical thinking, problem solving, and collaboration – are precisely the ones most at risk if students begin offloading too much of their thinking to AI without judgment or reflection.

AI didn’t introduce these tensions. It just dramatically exposes how dependent our system has been on proxies for learning rather than learning itself.

The False Solutions

Faced with this disruption, many schools are gravitating toward one of two responses. Neither is sufficient.

1. Ban AI

This is not a serious long-term option.

Students are entering a world where AI is embedded in nearly every field. Employers are already prioritizing AI fluency, and many organizations are restructuring work around AI-enabled productivity – as demonstrated by recent layoffs at Square and Meta. At the same time, a majority of students report that their education has not prepared them to use these tools effectively.

To ban AI in schools is to widen that gap – to prepare students for a world that no longer exists.

2. Try to “AI-proof” School

The second response is more subtle: redesign assessments to be AI-resistant, require students to document every step of their thinking, and monitor usage closely.

At first glance, this may seem reasonable. But it risks shifting education further toward surveillance rather than learning.

When the central question becomes “Did you use AI?”, we reframe the classroom around compliance once again. And when students are required to expose every step of their thinking for evaluation, we may unintentionally undermine the very processes we hope to support.

As researchers such as Timothy Cook have argued, there is such a thing as cognitive privacy – a space in which learners can explore, struggle, and revise their thinking without constant scrutiny. When that space disappears, students may become more performative, less open, and less willing to take intellectual risks.

These approaches to “AI-proofing” school may restore a sense of control. But they do not move education forward.

The Deeper Issue

AI is not the root problem. It is simply revealing a deeper one.

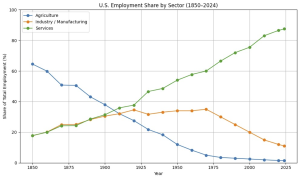

Modern schooling was designed in the 19th and early 20th centuries to meet the needs of an industrializing society – one that valued standardization, efficiency, and compliance. Knowledge was scarce, and schools were structured to distribute it. The workforce was transitioning from agrarian to industrial labor.

That is no longer the world we live in.

Information is now abundant. Service-sector jobs now make up nearly 90% of US employment, and much of this work is less routine. Success depends increasingly on the ability to think critically, adapt, collaborate, and make meaning in complex environments.

And yet, much of school still operates on the same underlying logic it did 150 years ago.

The consequences are visible. Research shows that student engagement declines sharply from late elementary school through high school. Curiosity gives way to strategy – students learn to optimize for grades rather than understanding. As Deci and Ryan’s work on motivation has shown, environments that reduce autonomy also reduce intrinsic motivation.

At precisely the stage of life when adolescents are developmentally primed to seek meaning, identity, and agency, school typically offers the opposite: more control, less relevance, and fewer opportunities for genuine ownership.

AI did not create this misalignment. It just makes it harder to ignore.

Why This Moment Matters

In the past, the limitations of the system could be managed. Students still had to produce the work themselves. Effort and output were more tightly coupled.

AI breaks that link.

It removes the last structural supports holding together a system built on task completion and answer production. If we continue to define learning in those terms, AI will steadily erode the remaining integrity of the system.

But it also creates an opening.

So, what does it actually mean to design learning that still requires thinking when AI is allowed?

Designing Learning That Still Requires Thinking

If AI can generate answers, then learning can no longer be defined by producing them.

The task must shift.

Not: Can you arrive at the answer?

But:

- Can you frame the right question?

- Can you evaluate and challenge AI outputs?

- Can you apply ideas in novel, real-world contexts?

- Can you make sensible decisions where there is no single correct answer?

- Can you contribute originally to the body of work in your field of interest?

In other words, the goal is not to avoid AI, but to design learning where thinking cannot be outsourced.

From Compliance to Agency

The real question is not whether we should use AI in education. That question has already been answered.

The question is whether we are ready to move from a system of compliance to one of agency.

In a compliance-based system, students are asked to:

- Follow instructions

- Complete assigned tasks

- Optimize for grades

In an agency-based system, students are expected to:

- Make decisions about their learning

- Take responsibility for outcomes

- Engage in work that has meaning beyond the classroom

AI makes this shift both more necessary and more possible.

What Thoughtful Integration Looks Like

Used thoughtfully, AI can support a different kind of learning environment:

- Personalized support, allowing students to get unstuck and move forward

- Deeper inquiry, enabling research, analysis, and synthesis at a higher level

- Real-world connection, helping students engage with authentic problems and audiences

- Expanded creation, allowing students to produce work that reflects genuine understanding

At the same time, research from USC’s CANDLE lab shows that adolescents learn best when their education engages their need to make meaning – through emotionally rich, socially grounded, and intellectually complex experiences. Students who engage in this kind of thinking show measurable differences in both cognitive and psychosocial development.

AI does not automatically create these conditions. But it lowers the barrier to designing for them.

Left unguided, however, AI will default to reinforcing existing patterns – more efficient worksheets, more polished outputs, more didactic teaching, more surface-level learning. Real change requires intentional design.

What This Means for EdTech Builders

If AI is going to reshape learning, it will happen not only through the choices of teachers and schools, but also through the tools students and teachers use every day.

And right now, many AI-powered tools are optimizing for the wrong outcomes.

They make it easier for students to complete assignments, but not necessarily to think more deeply.

They make it quicker and easier for teachers to handle administrative tasks, but they don’t help teachers shift their instructional practice in ways that deepen student engagement, agency, and cognition.

The next generation of learning products must be built around a different question:

How do we design experiences where thinking is required – even when AI is available?

For product leaders building in this space, this is not a philosophical question. It’s a design challenge.

And it’s one that will define which products actually matter in the next era of education.

That means:

- Tools that prompt judgment, not just generation

- Interfaces that support iteration, critique, and revision

- Systems that value decision-making over answer production

The opportunity here is enormous, but only if we choose to design for it.

The Choice Ahead

We cannot pretend that AI does not exist. Nor can we simply layer it casually onto a system that was already under strain.

If we use AI to reinforce compliance – prioritizing product-making over meaning-making – we will accelerate the decline of purposeful learning.

If instead we use it to support agency, we have the opportunity to redesign education around what actually matters: thinking, meaning-making, and human development.

This is not an easy shift. But it is an achievable one with the right design lens.

AI is not the transformation. It is the catalyst.

What happens next depends on what we choose to build.

Sources for this article include:

- BLS Monthly Labor Review (1984)

- BLS datasets of labor statistics and historical census reconstructions around the following benchmark points: 1850, 1860, 1870, 1880, 1890, 1900, 1910, 1920, 1930, 1940, 1952, 1957, 1962, 1967, 1972, 1977, 1979, 1982

- BLS Current Employment Statistics (CES) and Current Population Survey (CPS) – 1980s-2024